[Part 3 of a 3 part series]

See also:

Part 1: Configure Apache with mod_cluster load balancer on Ubuntu

Part 2: Wildfly domain management

Introduction

This is the third and final article in a series on how to set up and configure a clustered environment. In this post, we’ll walk through the basic steps required to configure the last pieces of modcluster in Wildfly and test session replication in our cluster.

If you haven’t read the post on Wildfly domain management, we recommend doing so before continuing with this article.

Just to recap: in part 2 of this series we configured two VMs with Wildfly in domain mode. We were working in the application network of the deployment model you see below. In this article, we’ll tie up the loose ends by looking at the communication channels between the modcluster load balancer (domain network) we set up in part 1 and the Wildfly cluster (application network) we set up in part 2.

Are you ready?

We’ll start off by configuring the modcluster subsystem in our Wildfly domain.

Modcluster subsystem configuration

In the domain.xml file of both the master and slave hosts, modify the mod-cluster-config section for the full-ha profile. We’re going to add the proxy-list attribute, which tells each host where to find the load balancer it should register with. mod_cluster will then also pick up the broadcasts from these hosts and register them in its cluster. The value of the proxy-list attribute is the address on which our mod_cluster module is listening: port 6666 on the Apache host. After our changes, the mod-cluster-config section will look like this:

mod-cluster-config

<subsystem xmlns="urn:jboss:domain:modcluster:1.2">
    <mod-cluster-config advertise-socket="modcluster" connector="ajp"
                        proxy-list="<apache-host-ip>:6666">
        <dynamic-load-provider>
            <load-metric type="cpu"/>
        </dynamic-load-provider>
    </mod-cluster-config>
</subsystem>
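To confirm that the attribute ended up in the right profile on each host, the file can be inspected with a few lines of Python. This is only a sketch: the helper names, the trimmed sample document and the address 192.0.2.10 are made up for illustration, and it assumes the usual domain.xml layout in which each profile element wraps its subsystems.

```python
import xml.etree.ElementTree as ET

# Trimmed, made-up stand-in for a domain.xml; 192.0.2.10 is a placeholder address.
SAMPLE = """
<domain>
  <profiles>
    <profile name="full-ha">
      <subsystem xmlns="urn:jboss:domain:modcluster:1.2">
        <mod-cluster-config advertise-socket="modcluster" connector="ajp"
                            proxy-list="192.0.2.10:6666"/>
      </subsystem>
    </profile>
    <profile name="ha">
      <subsystem xmlns="urn:jboss:domain:modcluster:1.2">
        <mod-cluster-config advertise-socket="modcluster" connector="ajp"/>
      </subsystem>
    </profile>
  </profiles>
</domain>
"""


def local_name(tag):
    """Strip the '{namespace}' prefix that ElementTree puts in front of tag names."""
    return tag.rsplit("}", 1)[-1]


def find_proxy_lists(root):
    """Map each profile name to its proxy-list value (None if the attribute is missing)."""
    results = {}
    for profile in root.iter():
        if local_name(profile.tag) != "profile":
            continue
        for elem in profile.iter():
            if local_name(elem.tag) == "mod-cluster-config":
                results[profile.get("name")] = elem.get("proxy-list")
    return results


print(find_proxy_lists(ET.fromstring(SAMPLE)))
```

Against a real file, ET.parse(path).getroot() would take the place of ET.fromstring(SAMPLE). A value of None for full-ha means the edit has not been applied on that host.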

Cluster-user and cluster-password credentials

Finally, we need to add the admin user’s credentials to the HornetQ server’s messaging subsystem.

Modify domain.xml on both master and slave hosts. Find the section as shown below and change accordingly:

domain.xml

<subsystem xmlns="urn:jboss:domain:messaging:2.0">
    <hornetq-server>
        <clustered>true</clustered>
        <cluster-user>admin</cluster-user>
        <cluster-password>admin</cluster-password>
        …
    </hornetq-server>
</subsystem>

The cluster-user and cluster-password credentials are used to make connections between cluster nodes. These connections are used to move messages from one node to another for load-balancing purposes. However, they can technically be used by any remote client to connect to the server, which is why you should change them from the default. It is possible to encrypt the admin user’s password, but this is not in the scope of this guide. If you are interested, take a look here or contact us.
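Because the same change has to be made by hand on both hosts, a quick consistency check can catch a typo in one of the files. The following is a minimal sketch under the assumption that both domain.xml files are available locally; the function names and the two inline sample documents are hypothetical.

```python
import xml.etree.ElementTree as ET

# Made-up, heavily trimmed stand-ins for the master and slave domain.xml files.
MASTER = """
<domain><profile name="full-ha">
  <subsystem xmlns="urn:jboss:domain:messaging:2.0">
    <hornetq-server>
      <clustered>true</clustered>
      <cluster-user>admin</cluster-user>
      <cluster-password>admin</cluster-password>
    </hornetq-server>
  </subsystem>
</profile></domain>
"""
SLAVE = MASTER.replace(
    "<cluster-password>admin<", "<cluster-password>admln<"
)  # deliberate typo in the slave copy


def local_name(tag):
    """Strip the '{namespace}' prefix that ElementTree puts in front of tag names."""
    return tag.rsplit("}", 1)[-1]


def cluster_credentials(root):
    """Return one (cluster-user, cluster-password) tuple per hornetq-server element."""
    found = []
    for elem in root.iter():
        if local_name(elem.tag) != "hornetq-server":
            continue
        user = password = None
        for child in elem:
            name = local_name(child.tag)
            if name == "cluster-user":
                user = (child.text or "").strip()
            elif name == "cluster-password":
                password = (child.text or "").strip()
        found.append((user, password))
    return found


def same_credentials(master_root, slave_root):
    """True when both files declare identical cluster credentials."""
    return cluster_credentials(master_root) == cluster_credentials(slave_root)


print(same_credentials(ET.fromstring(MASTER), ET.fromstring(SLAVE)))
```

With the deliberate typo in the slave sample the check reports a mismatch; once both files carry the same values it returns True.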

Session replication testing

This verification test can only be done after you have completed part 1 of the series, on how to configure Apache 2.4.7 with the mod_cluster load balancer on Ubuntu 14.04, as well as part 2, which was about Wildfly domain management.

After you have completed the above-mentioned guides and pointed the modcluster subsystem of the respective hosts to the mod_cluster address of the machine Apache is hosted on, navigate to:

cluster-demo

Put

You’ll be presented with the Hello World screen we verified in the Deployment section of Part 2: Wildfly domain management. Notice how we didn’t add a port number to the above URL. The cluster-demo application we deployed to master gets a registered web context on the cluster once the nodes in our Wildfly cluster and the load balancer have established connections to each other. Next, we’ll call put.jsp to put the current time into a session variable like so:

cluster-demo.put

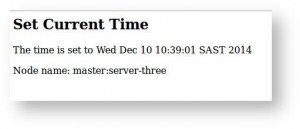

You’ll see that the time has been set. Make a note of the time, as we’ll verify it is correct when we fetch it from the session. Also note which node served the request, because this is the node we will shut down from the management console to test session replication.

Below is an example of the response:

Get

In the management console on 192.168.56.101:9990, under the “Domain” tab and “Overview” view, shut down the server that served the request when you set the time. In my case it was master:server-three, so I’ll shut it down.

After the server has been shut down, we call get.jsp like so:

cluster-demo.get

If everything went well, you should see the timestamp that was displayed when we called put.jsp. You should also see that slave:server-three-slave served the request. Below is an example of the response:

And that is it! We have successfully tested our Wildfly cluster by deploying once into the domain via our domain controller (master). The domain controller then propagated the deployable artifact to all the domain members. We used our load balancer to access the deployed application and then checked that session replication works across hosts: we set the current time into a session variable, shut down the master node that served that request, and fetched the time from the session variable again. The slave host served the fetch request and successfully returned the time that the master host had stored in the session.
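The same put/get check can also be driven from a script rather than the browser. The sketch below assumes put.jsp and get.jsp sit directly under the cluster-demo context on the load balancer and that the session is tracked with a cookie; the function names are hypothetical and the base URL has to be supplied by you. Since the exact response format of the two pages is not assumed, the script only returns both bodies for comparison by eye.

```python
import http.cookiejar
import urllib.request


def make_fetcher():
    """Return fetch(url) that keeps cookies between calls, so the session
    cookie handed out on the put request is sent again on the get request."""
    jar = http.cookiejar.CookieJar()
    opener = urllib.request.build_opener(urllib.request.HTTPCookieProcessor(jar))

    def fetch(url):
        with opener.open(url, timeout=10) as resp:
            return resp.read().decode("utf-8", errors="replace")

    return fetch


def run_check(base_url, fetch, pause):
    """Call put.jsp, wait for the operator, then call get.jsp in the same session."""
    put_body = fetch(base_url + "/put.jsp")
    pause()  # shut down the node that served put.jsp before continuing
    get_body = fetch(base_url + "/get.jsp")
    return put_body, get_body


# Example use (the base URL is a placeholder for your own load balancer address):
# put_body, get_body = run_check(
#     "http://<apache-host-ip>/cluster-demo",
#     make_fetcher(),
#     lambda: input("Shut down the serving node, then press Enter... "),
# )
# print(put_body)
# print(get_body)
```

Passing the fetch and pause steps in as arguments keeps the sequence easy to try out without a running cluster, and the shared cookie jar is what makes the second request land in the same session as the first.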

What have we covered in this series?

In summary

- In part 1 of this series, we looked at how to configure Apache 2.4.7 with the mod_cluster load balancer on Ubuntu. We did this so we could have a communication channel to forward requests from Apache (httpd) to one of a set of application server nodes, such as Wildfly.

- In part 2 of this series, we looked at how to set up Wildfly in domain mode in support of our clustered environment. We did this to enable our application to have high availability, as well as a central point of control and configuration for multiple server nodes.

- In the last installment of this series, we brought the first two parts together by configuring Wildfly’s modcluster subsystem. We then closed off by testing session replication in our clustered environment.